
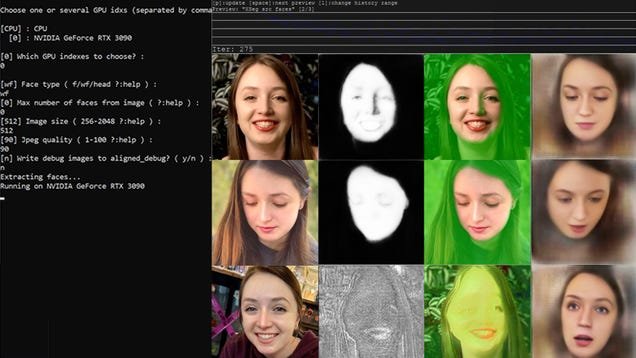

Anyone who’s spent a moment observing US political chatter in recent months has probably encountered the following prediction: 2024 will yield the world’s first deepfake election. Rapidly evolving AI video and audio generators powered by large language models have already been used by both Donald Trump’s and Ron DeSantis’ presidential campaigns to smear each other, and fakes of current President Joe Biden seem to proliferate regularly. Nervous lawmakers, possibly worried their own faces may soon wind up sucked into the AI-generated quagmire, have rushed to propose more than a dozen bills trying to rein in deepfakes at the state and federal levels.

But fingernail-chewing lawmakers are late to the party. Deepfakes targeting politicians may seem new, but AI-generated pornography, which still makes up the overwhelming majority of nonconsensual deepfakes, has tormented thousands of women for over half a decade, their stories often buried beneath the surface of mainstream concerns. A group of deepfake victims is attempting to lift that veil by recounting their trauma, and the steps they’ve taken to fight back against their aggressors, in a shocking new documentary called Another Body.

One of the women targeted, a popular ASMR streamer named Gibi with 280,000 followers on Twitch, spoke with Gizmodo about her decision to publicly acknowledge the sexual deepfakes made of her. She hopes her platform can help shine a spotlight on the too-often overlooked issue.

“I think we spend most of our time convincing ourselves that it’s not a big deal and that there are worse things in the world,” Gibi said in an interview with Gizmodo. “That’s kind of how I get by with my day, is just you can’t let it bother you, so you convince yourself that it’s not that bad.”

“Hearing from other people that it is that bad is a mix of emotions,” she added. “It’s a little bit relieving and also a little bit scary.”

Gibi is one of several women in the film who describe their experiences after finding deepfakes of themselves. The documentary, directed by filmmakers Sophie Compton and Reuben Hamlyn, largely follows the life of an engineering college student named Taylor who discovered deepfake pornography of herself circulating online. Taylor isn’t the student’s real name. In fact, all appearances of Taylor and another deepfake victim presented in the documentary are themselves deepfake videos created to conceal their true identities.

The 22-year-old student discovers the deepfake after receiving a chilling Facebook message from a friend who says, “I’m really sorry but I think you need to see this.” A PornHub link follows.

Taylor doesn’t believe the message at first and wonders if her friend’s account was hacked. She ultimately decides to click on the link and is presented with her own face, engaged in hardcore pornography, staring back at her. Taylor later learns that someone pulled images of her face from her social media accounts and ran them through an AI model to make her appear in six deepfaked sex videos. Making matters worse, the culprit behind the videos posted them on a PornHub profile impersonating her, with her real college and hometown listed.

The at times devastatingly horrific film lays bare the trauma and helplessness victims of deepfakes are forced to endure when presented with sexualized depictions of themselves. While most conversations and media depicting deepfakes focus on celebrities or high-profile individuals in the public eye, Another Body illustrates a troubling reality: Deepfake technology powered by increasingly powerful and easy-to-access large language models means everyone’s face is up for grabs, regardless of their fame.

Rather than end on a grim note, the film spends the majority of its time following Taylor as she unravels clues about her deepfakes. Eventually, she learns of another woman at her school targeted by similar deepfakes without her consent. The two then dive deep into 4chan and other hotbeds of deepfake depravity to uncover any clues they can to unmask their tormentor. It’s during that descent into the depths of the deepfake underground that Taylor stumbles across faked images of Gibi, the Twitch streamer.

Twitch streamer speaks out

Gibi, speaking with Gizmodo, said she’s been on the receiving end of so many deepfake videos at this point that she can’t even recall when she saw the first one.

“It all just melds together,” she said.

As a streamer, Gibi has long faced a slew of harassment, beginning with sexualized text messages and the more-than-occasional dick pic. Deepfakes, she said, were gradually added into the mix as the technology evolved.

In the beginning, she says, the fakes weren’t all that sophisticated, but the quality quickly evolved and “started looking more and more real.”

But even obviously faked videos still manage to fool some. Gibi says she was amazed when she heard of people she knew falling for the crude, hastily thrown-together early images of her. In some cases, the streamer says, she’s heard of advertisers severing ties with other creators altogether because they believed the creators were engaging in pornography when they weren’t.

“She was like, ‘That’s not me,’” Gibi said of a friend who lost advertiser support due to a deepfake.

Gibi says her interactions with Taylor partially inspired her to release a YouTube video titled “Speaking out against deep fakes” where she opened up about her experiences on the receiving end of AI-generated manipulated media. The video, posted last year, has since attracted nearly half a million views.

“Talking about it just meant that it was going to be more eyes on it and be giving it a bigger audience,” Gibi said. “I knew that my power lay more in the public sphere, posting online and talking about difficult topics and being honest, much work.”

When Gibi decided to open up about the issue, she says she initially avoided reading the comments, not knowing how people would react. Fortunately, the responses were overwhelmingly positive. Now, she hopes her involvement in the documentary can draw even more eyeballs to potential legislative solutions to prevent or punish sexual deepfakes, an issue that’s taken a backseat to political deepfake legislation in recent months. Speaking with Gizmodo, Gibi said she was optimistic about the public’s renewed interest in deepfakes but expressed some annoyance that the brightened spotlight only arrived after the issue started impacting more male-dominated spaces.

“Men are both the offenders and the consumers and then also the people that we feel like we have to appeal to to change anything,” Gibi said. “So that’s frustrating.”

Those frustrations were echoed by EndTAB founder Adam Dodge, who also makes several appearances in Another Body. An attorney who has worked in gender-based violence for 15 years, Dodge said he founded EndTAB in order to empower victim service providers and educate leaders about the threats posed by technology used to carry out harassment. Taylor, the college student featured in the film, reached out to Dodge for advice after she discovered her own deepfakes.

Speaking with Gizmodo, Dodge said it’s important to acknowledge that online harassment isn’t really new. AI and other emerging technologies are simply amplifying an existing problem.

“People have been using nude images of victims to harass or exert power and control over them or humiliate them for a really long time,” Dodge said. “This is just a new way that people are able to do it.”

Deepfakes have altered the equation, Dodge notes, in one crucial way. Victims no longer need to have intimate images of themselves online to be targeted. Simply having publicly available photos on Instagram or a college website is enough.

“We’re all potential victims now because all they need is a picture of our face,” Dodge said.

Though his organization is primarily intended for training purposes, Dodge says victims would seek him out looking for help because he was one of the few people trying to raise awareness about the harms early on. That’s how he met Taylor.

Speaking with Gizmodo, Dodge expressed similar frustrations with the scope of some emerging deepfake legislation. Though the overwhelming majority of deepfakes posted online involve nonconsensual pornography of women, Dodge estimates around half of the bills he’s seen proposed focus instead on election integrity.

“I think that’s because violence against women is an issue that is never given proper attention, is consistently subverted in favor of other narratives, and legislators and politicians have been focused on deepfake misinformation that would target the political sphere because it is an issue that affects them personally,” he said. “Really, what we’re talking about is a privilege issue.”

Deepfakes are consuming the internet

Sexual deepfakes are proliferating at an astonishing clip. An independent researcher speaking with Wired this week estimates some 244,625 videos have been uploaded to the top 35 deepfake porn websites over the past seven years. Nearly half (113,000) of those videos were uploaded during the first nine months of this year. Driving home the point, the researcher estimates more deepfaked videos will be uploaded by the end of 2023 than in all other years combined. That doesn’t even include other deepfakes that may exist on social media or in creators’ private collections.

“There has been significant growth in the availability of AI tools for creating deepfake nonconsensual pornographic imagery, and an increase in demand for this type of content on pornography platforms and illicit online networks,” Monash University Associate Professor Asher Flynn said in an interview with Wired. “This is only likely to increase with new generative AI tools.”

Disheartening as all of that may sound, lawmakers are actively working to find potential solutions. Around half a dozen states have already passed legislation criminalizing the creation and sharing of sexualized deepfakes without an individual’s consent. In New York, a recently passed law making it illegal to disseminate or circulate sexually explicit images of someone generated by artificial intelligence takes effect in December. Violators of the law could face up to a year in jail.

“My bill sends a strong message that New York won’t tolerate this form of abuse,” state senator Michelle Hinchey, the bill’s author, recently told Hudson Valley One. “Victims will rightfully get their day in court.”

Elsewhere, lawmakers at the federal level are pressuring AI companies to create digital watermarks that would clearly disclose to the public when media has been altered using their programs. Some major companies involved in the AI race, like OpenAI, Microsoft, and Google, have voluntarily agreed to work toward a clear watermarking system. Still, Dodge says detection efforts and watermarking only address so much. Pornographic deepfakes, he notes, are devastatingly harmful and create lasting trauma even when everyone knows they are fake.

Even with nonconsensual deepfakes poised to skyrocket in the near future, Dodge remains surprisingly and reassuringly optimistic. Lawmakers, he said, seem willing to learn from their past mistakes.

“I still think we’re very early and we’re seeing it get legislated. We’re seeing people talk about it,” Dodge said. “Unlike with social media being around for a decade and [lawmakers] not really doing enough to protect people from harassment and abuse on their platform, this is an area where people are pretty interested in addressing it across all platforms and whether legislative, law enforcement, tech in society at large.”